| Fields | Description |
|---|---|
| text | The sentence or sentence fragment. |
| dimension | Descriptive category of the text. |
| biased_words | A compilation of words regarded as biased. |
| aspect | Specific sub-topic within the main content. |
| label | Indicates the presence (True) or absence (False) of bias. The label is ternary: highly biased, slightly biased, and neutral. |
| toxicity | Indicates the presence (True) or absence (False) of toxicity. |
| identity_mention | Mention of any identity based on words match. |

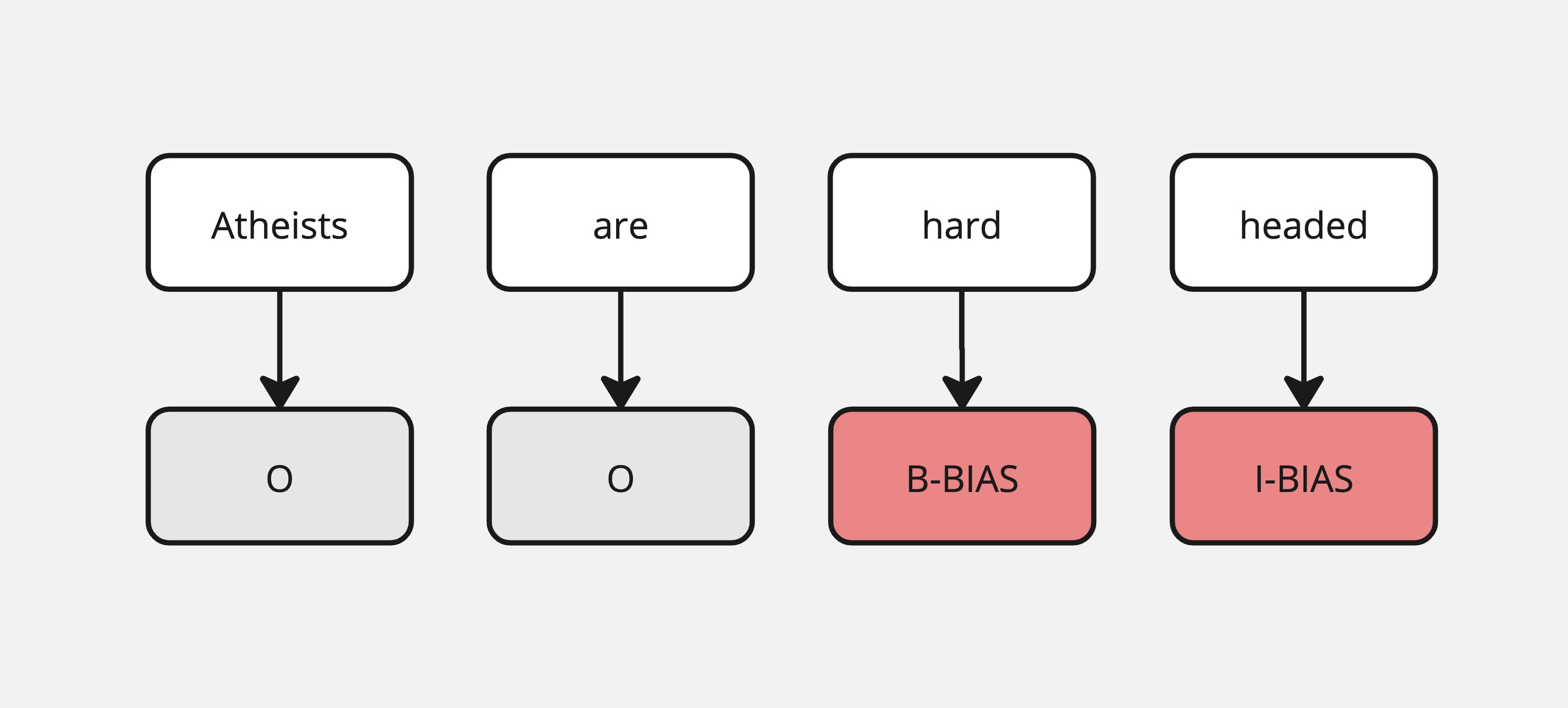

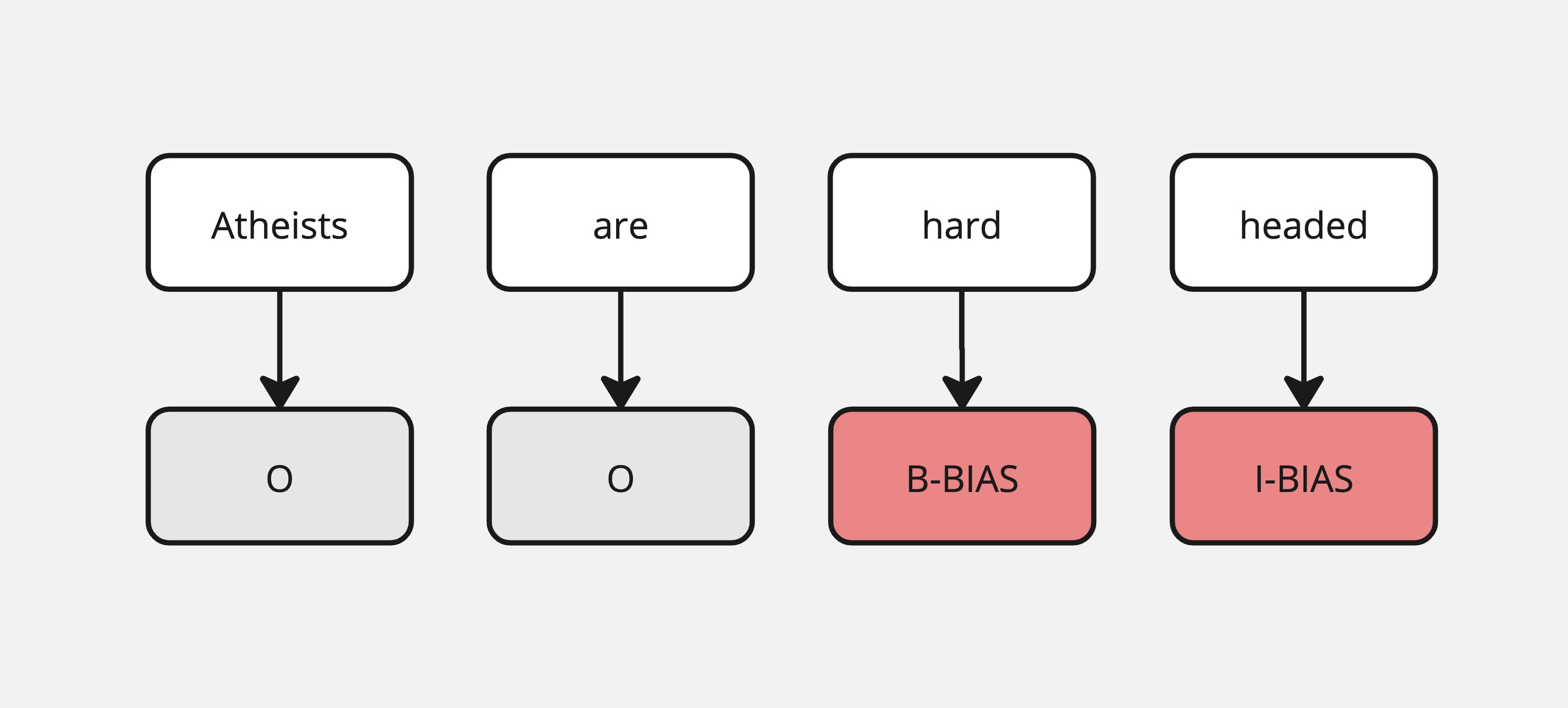

| Field | Description |
|---|---|
| text_str | The full text fragment where bias is detected. |
| ner_tags | Binary label, presence (1) or absence (0) of racial bias. |
| rationale | Binary label, presence (1) or absence (0) of religious bias. |

The GUS dataset is a random sample of the corpus (3739 rows), so this chart should also reflect the distribution in the full corpus.
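
The reasoning behind that claim can be illustrated with a small simulation. This is only a sketch on synthetic data: the corpus size (50,000), the label names, and the 30% share are assumptions made up for the demonstration, not figures from the GUS dataset. Only the sample size of 3739 comes from the text.

```python
import random
from collections import Counter

random.seed(0)  # fixed seed so the sketch is reproducible

# Synthetic stand-in for the corpus (assumed size and label mix, not real data).
corpus = ["biased"] * 15_000 + ["neutral"] * 35_000

# Draw a simple random sample of the same size as the GUS dataset.
sample = random.sample(corpus, 3739)


def share(labels, value):
    """Fraction of entries in `labels` that equal `value`."""
    return Counter(labels)[value] / len(labels)


corpus_share = share(corpus, "biased")
sample_share = share(sample, "biased")
print(f"corpus: {corpus_share:.3f}  sample: {sample_share:.3f}")
```

With a sample of this size the two shares typically differ by about a percentage point or less, which is why a chart built on the sample is a reasonable proxy for the corpus, provided the sample really was drawn at random.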

| Fields | Description |
|---|---|
| text | The text fragment (few sentences or less). |
| outlet | The source of the text fragments. |
| label | 0 or 1 (biased or unbiased). |
| topic | The subject of the text fragment. |
| news_link | URL to the original source. |
| biased_words | Full words contributing to bias, in a list. |
| type | Political sentiment (if applicable). |
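
As a minimal sketch of how a record with these fields might be sanity-checked before use, the snippet below verifies that every column is present, that `label` is 0 or 1, and that `biased_words` is a list. The function name `check_record` and the sample row are hypothetical and invented for illustration; they are not part of the dataset or its tooling.

```python
from typing import Any

# Column names taken from the field table above.
EXPECTED_FIELDS = (
    "text", "outlet", "label", "topic", "news_link", "biased_words", "type",
)


def check_record(record: dict[str, Any]) -> list[str]:
    """Return a list of human-readable problems found in one record."""
    problems = [
        f"missing field: {name}" for name in EXPECTED_FIELDS if name not in record
    ]
    if "label" in record and record["label"] not in (0, 1):
        problems.append("label must be 0 or 1")
    if "biased_words" in record and not isinstance(record["biased_words"], list):
        problems.append("biased_words must be a list")
    return problems


# Hypothetical row, invented for illustration only.
example = {
    "text": "An example sentence.",
    "outlet": "example-outlet",
    "label": 1,
    "topic": "example-topic",
    "news_link": "https://example.com/article",
    "biased_words": ["example"],
    "type": "center",
}

print(check_record(example))  # an empty list means no problems were found
```

Returning a list of problems rather than raising on the first one makes it easier to report every issue in a row at once.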